Google: Static Sign-Off Methodology & Results

DAC 2019 panel presentation by Hamid Shojaei of Google (edited transcript)

Case Study Overview

Hamid Shojaei of Google presents a case study on Google’s static sign-off methodology. He covers best practices and results for RTL linting, single-mode and multimode clock domain crossing (CDC), and reset domain crossing (RDC), using Real Intent tools.

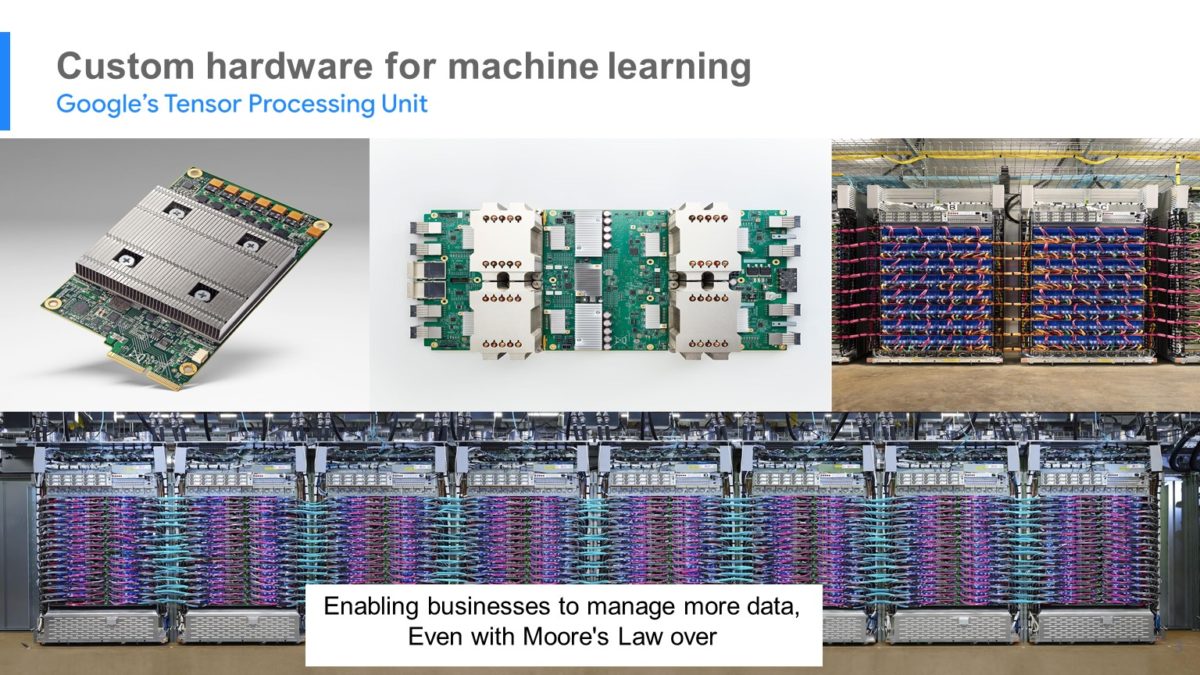

Google & Machine Learning, TPUs

Google aspires to create technologies that solve important problems — and we are relying on machine learning to help us reach our goal.

We believe these technologies can promote innovation and further our mission to organize the world’s information and make it universally accessible and useful.

To implement these algorithms and advanced technologies, we have relied for many years on Moore’s Law to give us the computing power we need. But as you know, Moore’s Law is in decline, and we can no longer expect large across-the-board speedups.

That’s why, several years ago at Google, we started a project to build custom hardware for machine learning, called Tensor Processing Units, or TPUs.

We have been using TPUs in our data center for several years and found them to give us an order of magnitude better optimized performance per watt for machine learning.

This is roughly equivalent to fast-forwarding technology about 7 years into the future — or 3 generations of Moore’s Law.

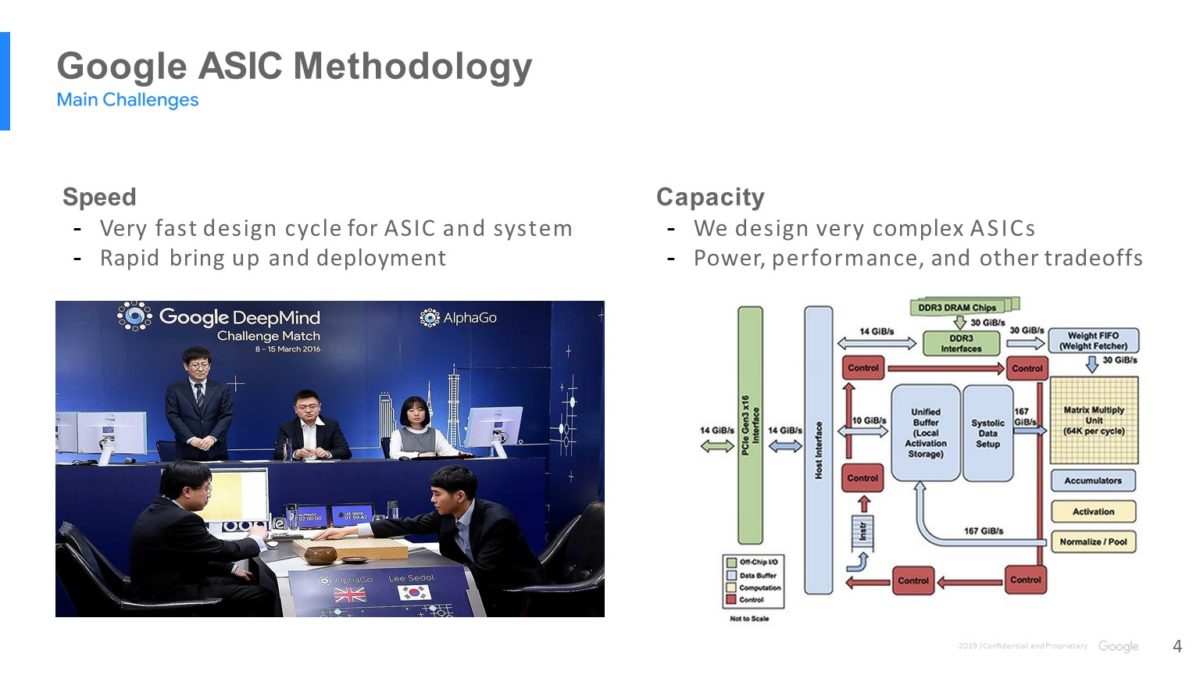

Google TPU Design Challenges

Let’s look at the challenges we are facing while making TPUs. The first is that our product cycle is short, and time-to-market is very important for us.

Our first TPU was used for AlphaGo, which competed in the 2016 Go contest and beat the top master Lee Sedol.

Since then we have made several generations of TPUs, and we have more generations to come. As you can see, we execute very fast and we need EDA tools that can cope with our schedule.

Another fact is that a TPU is a complex design; it has many blocks running at different clock frequencies, with different power and performance requirements.

We also use advanced technologies to make our chip; for that we need a high level of collaboration and co-optimization between designers, the process, and the tool developers.

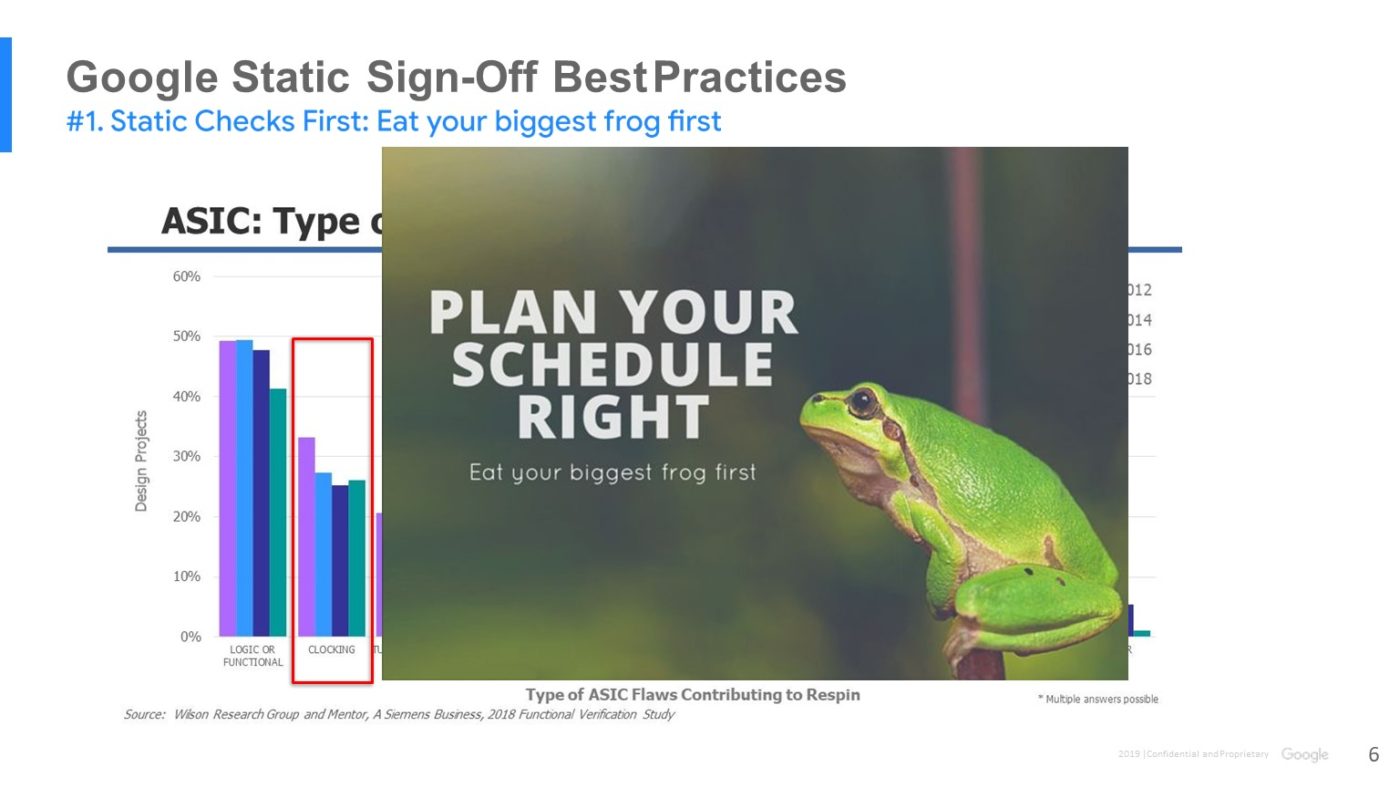

Clocking is Primary ASIC Design Challenge

Let’s look at this chart from Wilson Research. According to this research, the main types of flaws contributing to respins are functional bugs and clocking issues, and for clocking, CDC verification is very important.

For both categories, static sign-off / static verification is very important and helps a lot. However, the issue is that for the ASIC design community, static sign-off is usually the last stage and there is no time to ensure quality and robustness.

How do we address this issue?

Static Sign-Off Best Practices

#1: Run Static Checks First

As engineers, sometimes we need to go to therapy, and if you don’t need to, you should be proud of yourself. But if you do, there is a common piece of advice that they always give you:

“If it’s your job to eat a frog, it’s best to eat it first thing in the morning. And if it’s your job to eat two frogs, it’s best to eat the biggest one first.”

At Google we try to listen to this advice. We know static sign-off is very challenging and time consuming, so we start as early as possible — from day 1 of RTL design.

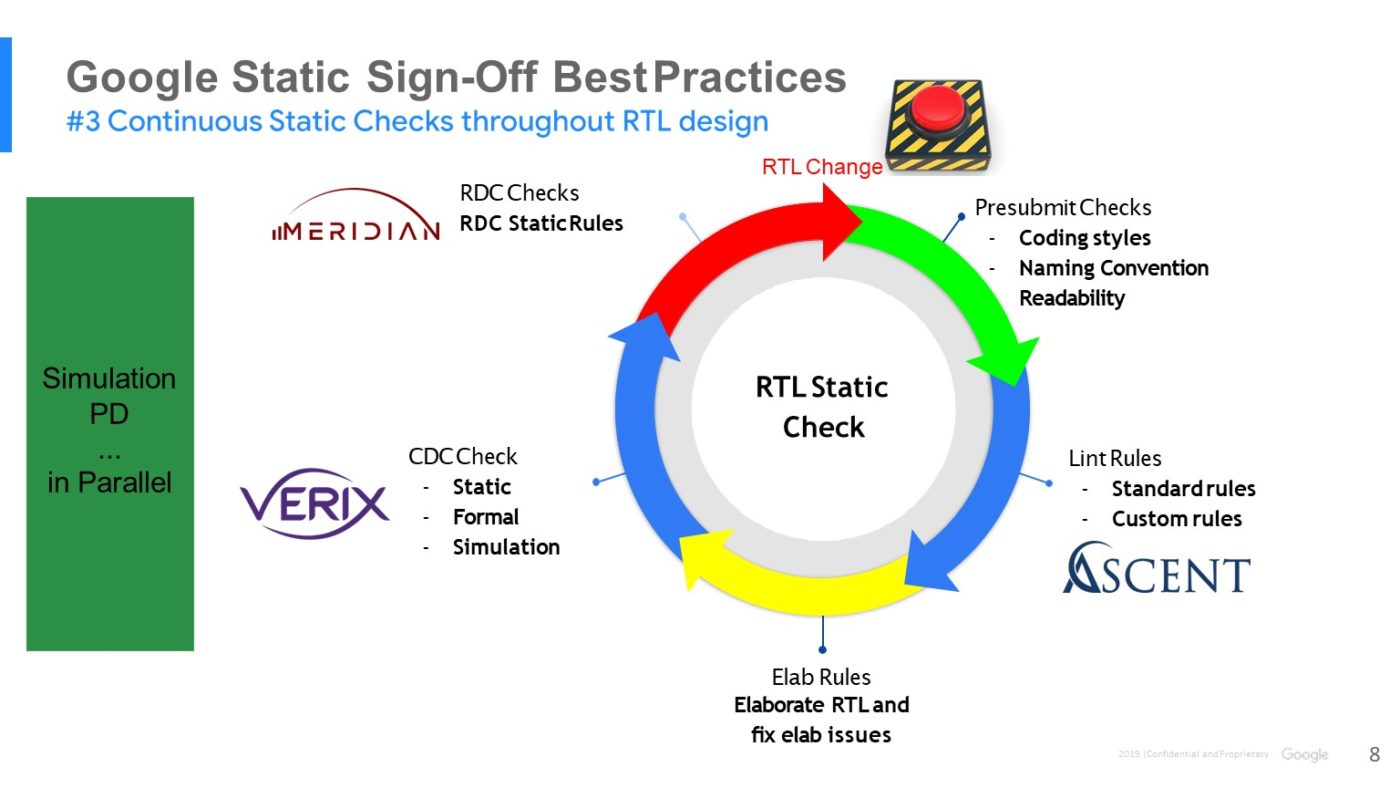

#2: Run Broad Range of Static Checks

This slide shows the big picture of the different static verification checks that we run on our code. Before we submit our code, we have a “pre-submit” step, which runs some basic checks on it.

Then we have linting, in which we have standard rules, as well as some custom rules that we have developed internally. Elaboration is next to make sure that RTL does not have any elab issues.

We use a combination of static and dynamic approaches for CDC verification. And we also run RDC (reset domain crossing).

#3: Run Continuous Static Checks

Please note that all these tasks are done in parallel and are independent of our functional verification. Sometimes we run them even before we do functional verification.

Another key advantage is that this process is early and continuous.

This means that any change in the RTL triggers the entire procedure, and we run all the tasks in parallel again.
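As a rough illustration of "any RTL change re-runs every check in parallel", here is a minimal Python sketch. The check names come from the stages described in this talk; `run_check`, `on_rtl_change`, and the block names are hypothetical placeholders, not Google's actual flow or any vendor's API.

```python
from concurrent.futures import ThreadPoolExecutor

# Check stages named in the talk; the list itself is an illustrative assumption.
CHECKS = ["lint", "elaboration", "cdc", "rdc"]

def run_check(check, block):
    """Hypothetical stand-in for launching a static tool on one block.

    A real flow would invoke the tool and parse its status; this stub
    simply reports a pass.
    """
    return (block, check, True)

def on_rtl_change(changed_blocks):
    """Run every check on every changed block, with all jobs in parallel."""
    jobs = [(check, block) for block in changed_blocks for check in CHECKS]
    with ThreadPoolExecutor(max_workers=8) as pool:
        # pool.map preserves the order of `jobs` in its results.
        return list(pool.map(lambda job: run_check(*job), jobs))

results = on_rtl_change(["blk_a", "blk_b"])
print(len(results))  # 8 jobs: 2 blocks x 4 checks
```

The checks are independent of each other and of functional verification, which is what makes this kind of fan-out straightforward.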

Google’s Results: Found More bugs, Reduced Late Changes

Let’s talk about the impact of this early and continuous process. I’ll talk about CDC as one of the main challenges in the static sign-off.

CDC verification used to be the last thing in our design cycle — and I believe many companies are in the same boat.

For one of our projects everything was done, except CDC. We started to run CDC verification and the tool was reporting over 50,000 violations on one of our complex designs. Of course, many of the violations were just false failures, noise, and missing constraints. But good luck reviewing 50,000 violations, and then making sure the design is CDC safe. As you can imagine, that’s not feasible.

That’s why we decided to change our strategy and eat our biggest frog first.

- Now we have a nightly regression in which we run static tools on all the blocks every night from day one of our RTL design.

- The results are parsed, and our dashboard gets updated.

- As part of our continuous process, we will even file a bug and send an email to the corresponding designer if a block is failing.

- We keep the dashboard clean throughout the project lifetime; as you can see it’s not easy for bugs to slip past our methodology and find their way into the later stages of RTL design.
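The nightly loop described in the bullets above (parse results, update the dashboard, notify the owner of a failing block) could be sketched roughly as follows. The `block,owner,violations` report format, the `BlockResult` type, and the function names are all assumptions made for illustration; the talk does not describe the real report format or bug-filing system.

```python
from dataclasses import dataclass

@dataclass
class BlockResult:
    block: str
    owner: str        # designer to notify if the block fails
    violations: int   # violation count parsed from a tool report

def parse_report(line):
    """Parse one line of a hypothetical 'block,owner,violations' summary."""
    block, owner, count = line.strip().split(",")
    return BlockResult(block, owner, int(count))

def nightly(report_lines):
    """Return (dashboard, notifications) for one nightly run."""
    dashboard = {}
    notifications = []
    for line in report_lines:
        result = parse_report(line)
        failing = result.violations > 0
        dashboard[result.block] = "FAIL" if failing else "PASS"
        if failing:
            # A real flow would file a bug and send an email here.
            message = f"{result.block}: {result.violations} violations"
            notifications.append((result.owner, message))
    return dashboard, notifications

dashboard, notes = nightly(["alu,alice,0", "dma,bob,3"])
print(dashboard)  # {'alu': 'PASS', 'dma': 'FAIL'}
print(notes)      # [('bob', 'dma: 3 violations')]
```

Because this runs every night from day one, each run only has to surface the violations introduced since the previous night, which is what keeps the dashboard reviewable.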

This continuous process reduces the noise level, which is one of the most pressing challenges in RTL sign-off.

The results are: 1) significant reduction of later stage RTL changes, and 2) cutting at least a month of chip development time when compared to our previous methodology.

Google’s Must Haves Moving Forward

In terms of what we must have, we are looking to the future and thinking about possible approaches that can help us develop our next generation complex chips. We are collaborating with EDA vendors — including for static tools — to make it happen.

One is EDA on the cloud. We are seeing more than $100 billion invested in cloud infrastructure every year. At Google we have a unique advantage because we own vast computing resources on the cloud, as well as fast networking and lots of cloud storage.

So, we use these resources for EDA flows and move our EDA flows to the cloud to get scalability, elasticity, reliability and security; all of them are very important for us.

Another trend builds on a unique advantage we have at Google: our massively scaled, detailed logs and the fact that Google is an AI-first company. In the past decade, hardware and systems have significantly transformed machine learning.

Now it’s time for machine learning to transform the system. Seventy percent of the design cycle involves a human in the loop; by using machine learning, we believe we can reduce that and get faster design cycles and cheaper chips.

Thank you very much. I will be happy to take any questions at the end. By the way, we have openings in our team, and we need more bright people to do all this cool stuff. So, please come and join us. Thank you.

Q&A: Multimode Clock Domain Crossing

Question:

From your experience, why would you use multi-mode CDC over single-mode CDC?

Hamid’s response:

That’s a good question. Actually, in our design we use a lot of clock muxes, which let us select among different clocks.

We have different modes of operation, and based on that we can choose which clock to use for that IP. Plus, sometimes we also make our IP very general so that we can use it for different purposes — maybe for different chips we need different clock frequencies.

For all of these, we need support for clock muxes, and that’s why we need multi-mode support: we don’t want to repeat the same analysis for each mode. That’s the nice thing about [Real Intent] Verix CDC, and we decided to switch to it because it can do this in one shot.

Otherwise, we’d have to run Meridian CDC for every mode and review each result.
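To see why per-mode runs become costly, the short Python sketch below counts the clock-mode combinations for a design with a few clock muxes. The mux names and clock sources are invented for illustration, and this is only a worst-case count; it says nothing about how any CDC tool handles modes internally, and real constraints may rule out some combinations.

```python
from itertools import product
from math import prod

# Hypothetical clock muxes and the sources each one can select.
clock_muxes = {
    "core_clk_mux": ["pll0", "pll1", "ref"],
    "io_clk_mux": ["pll1", "ref"],
    "dbg_clk_mux": ["ref", "jtag"],
}

# Every combination of mux selections is a distinct clock mode.
modes = list(product(*clock_muxes.values()))
single_mode_runs = len(modes)

# The count is the product of the number of choices per mux.
assert single_mode_runs == prod(len(srcs) for srcs in clock_muxes.values())
print(single_mode_runs)  # 12
```

With only three small muxes, a single-mode flow already implies up to 12 separate runs and reviews, and the count multiplies with each additional mux.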